Deep Dive into Duplicate Suppression

Author: Dee Luo, Product Manager

Date: July 2020

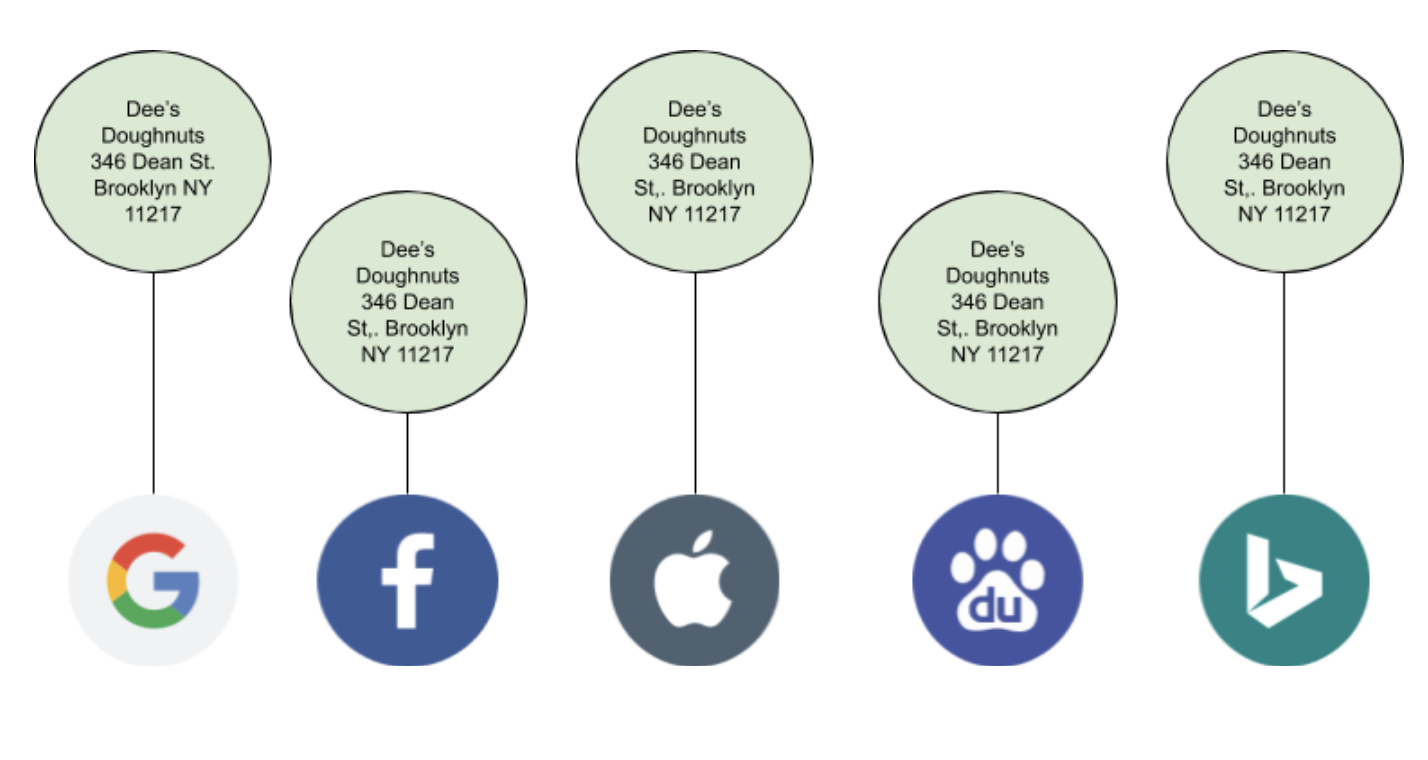

Brands know the importance of having accurate information across all the apps, maps, and directories where consumers are searching for information. In a perfect world, powering that brand data and managing each of these listings would be enough to ensure that consumers consistently get the answers they're searching for.

Our publishers, however, can receive signals and publish data coming from a number of different sources. More realistically, search results can often look like this:

Although the accurate listings exist, there are additional rogue listings that look like they could also represent the business and ultimately clutter results. This then becomes a guessing game for consumers — which listing is the correct listing? We call these rogue listings "duplicate listings."

Downfalls of Duplicate Listings

Once duplicate listings start to appear in search results, consumers then have to make a decision on which listing they trust. This can be extremely detrimental to the consumer experience if the information is outdated or conflicting. Is the store location open or closed? Which phone number should they call? Not only can these listings cause customer confusion with inaccurate information, but they can also take away valuable user engagement from your correct listings, such as reviews and engagement metrics. Even worse, conflicting data for your brand can drive consumers to instead engage with competitor listings.

It's important to note that duplicates don't just impact the consumer experience; they also affect the publisher's search experience. Which listing should the publisher feature in search results? If publishers are getting inconsistent data, they're likely to feel less confident in your true listing and are therefore less likely to show your brand in search.

Where exactly do these duplicates come from? Common business updates like rebrands or address changes can sometimes cause data sources, like aggregators, to erroneously create new listings when they aren't able to process updates immediately. Other times, store managers may find that ex-employees had previously created their own listings that current employees no longer have access to. It's hard to know exactly when duplicate listings get created and even harder to keep track of them all.

To add fuel to the fire, having duplicate listings removed can be a slow and cumbersome ordeal. Some sites like Google allow users to suggest edits to incorrect information or request to claim the listing to take full control of the data, but those changes can take weeks to process, if they are processed at all. For sites that don't offer these options, the best you can hope for is to send an email asking to take the duplicate listing down. Now imagine repeating that process for tens, hundreds, maybe even thousands of duplicate listings that potentially exist across the web.

We've built Yext's Duplicate Suppression technology to ensure that duplicates can be identified and managed at scale. With custom-built publisher integrations, Yext can proactively search sites for duplicate listings, surface potential duplicates to brands for review, and suppress duplicate listings in real time, all based on each publisher's best practices.

Proactively Search Sites

Yext issues a call to each publisher's Search API at a scheduled cadence to identify listings that represent the entity in the publisher's database. Our publishers' Search APIs typically mimic results that would be surfaced when a consumer searches directly in that publisher's interface.

It's best to use several strategies to ensure a thorough search is done to retrieve all potential listings that could represent your business. Depending on the data provided and what the publisher supports, we will use a combination of the following search strategies:

- Location Name + Geocoordinates

- Location Name + Address

- Location Name + Phone Number

- Address + Phone Number

- First Name / Last Name* + NPI

- First Name / Last Name* + Address

- First Name / Last Name* + Phone

*Healthcare Professional entity types allow brands to specify the first name and last name of the practitioner. This is currently not available for other individual practitioner types.
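To make the selection step concrete, here is a minimal sketch of how a set of search strategies could be chosen from the data a brand has provided and what a publisher supports. The `Entity` fields, the strategy keys, and the `applicable_strategies` helper are all hypothetical names used for illustration; they are not Yext's actual data model or code.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Entity:
    # Hypothetical entity record; every field is optional because
    # brands do not always provide all of them.
    name: Optional[str] = None
    address: Optional[str] = None
    phone: Optional[str] = None
    lat: Optional[float] = None
    lng: Optional[float] = None
    first_name: Optional[str] = None
    last_name: Optional[str] = None
    npi: Optional[str] = None


# Each strategy maps to the entity fields it needs (illustrative keys).
STRATEGIES = {
    "name+geo": ("name", "lat", "lng"),
    "name+address": ("name", "address"),
    "name+phone": ("name", "phone"),
    "address+phone": ("address", "phone"),
    "person+npi": ("first_name", "last_name", "npi"),
    "person+address": ("first_name", "last_name", "address"),
    "person+phone": ("first_name", "last_name", "phone"),
}


def applicable_strategies(entity, publisher_supported):
    """Return strategies the publisher supports and the entity has data for."""
    return [
        key
        for key, fields in STRATEGIES.items()
        if key in publisher_supported
        and all(getattr(entity, f) not in (None, "") for f in fields)
    ]
```

For a location with a name, address, and phone number but no geocoordinates, a publisher that supports the first three strategies would end up being searched with only two of them.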

Let's say we use three search strategies on average to pull results from each publisher, and that roughly 100 publishers support suppression. For a single business location, that works out to approximately 3 × 100 = 300 API calls to identify potential duplicates. To manage this increasingly large queue of requests at scale, we built what we call our Scheduler system.

The Scheduler is responsible for tracking, prioritizing, and kicking off requests to our publisher APIs from many callers throughout the Yext system, including Duplicate Suppression. Since we make millions of calls to our publisher network every day, it is important that we prioritize calls in a way that optimizes our performance while ensuring our volume of requests respects the rate limits and quotas imposed by our publishers.
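The balance described above can be illustrated with a small sketch: a priority queue of requests combined with a sliding-window rate limit per publisher. This is an assumption-laden toy, not the real Scheduler. The class name, the `submit` and `next_batch` methods, and the one-window-per-publisher limit are hypothetical, and the clock is passed in explicitly so the behavior is easy to follow.

```python
import heapq
from collections import defaultdict, deque
from itertools import count


class Scheduler:
    """Toy scheduler: lowest priority number runs first, within rate limits."""

    def __init__(self, max_calls_per_window, window_seconds=60.0):
        self.max_calls = max_calls_per_window  # dict: publisher -> call limit
        self.window = window_seconds
        self._queue = []
        self._seq = count()  # tie-breaker: FIFO order within a priority
        self._recent = defaultdict(deque)  # publisher -> recent call times

    def submit(self, priority, publisher, request):
        heapq.heappush(self._queue, (priority, next(self._seq), publisher, request))

    def _has_quota(self, publisher, now):
        calls = self._recent[publisher]
        # Drop timestamps that have aged out of the window.
        while calls and now - calls[0] >= self.window:
            calls.popleft()
        # Publishers with no configured limit are never called.
        return len(calls) < self.max_calls.get(publisher, 0)

    def next_batch(self, now):
        """Return (publisher, request) pairs that may run at time `now`."""
        ready, deferred = [], []
        while self._queue:
            item = heapq.heappop(self._queue)
            _, _, publisher, request = item
            if self._has_quota(publisher, now):
                self._recent[publisher].append(now)
                ready.append((publisher, request))
            else:
                deferred.append(item)  # over quota: keep it for later
        for item in deferred:
            heapq.heappush(self._queue, item)
        return ready
```

Requests that would exceed a publisher's limit stay queued and are picked up by a later `next_batch` call once earlier calls have aged out of the window.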

While Yext can't prevent duplicate listings from appearing, the Scheduler is constantly running behind the scenes, issuing hundreds of thousands of search calls a day and analyzing search results to ensure new duplicate listings are identified as quickly as possible.

Surface Potential Duplicates for Review

For each listing result brought back from the publisher search calls, we then need to determine the likelihood the listing represents your business location based on data comparisons and distance functions. This step filters out any listings that we confidently know are not a match for that location, narrowing down the pool of potential duplicate listings.

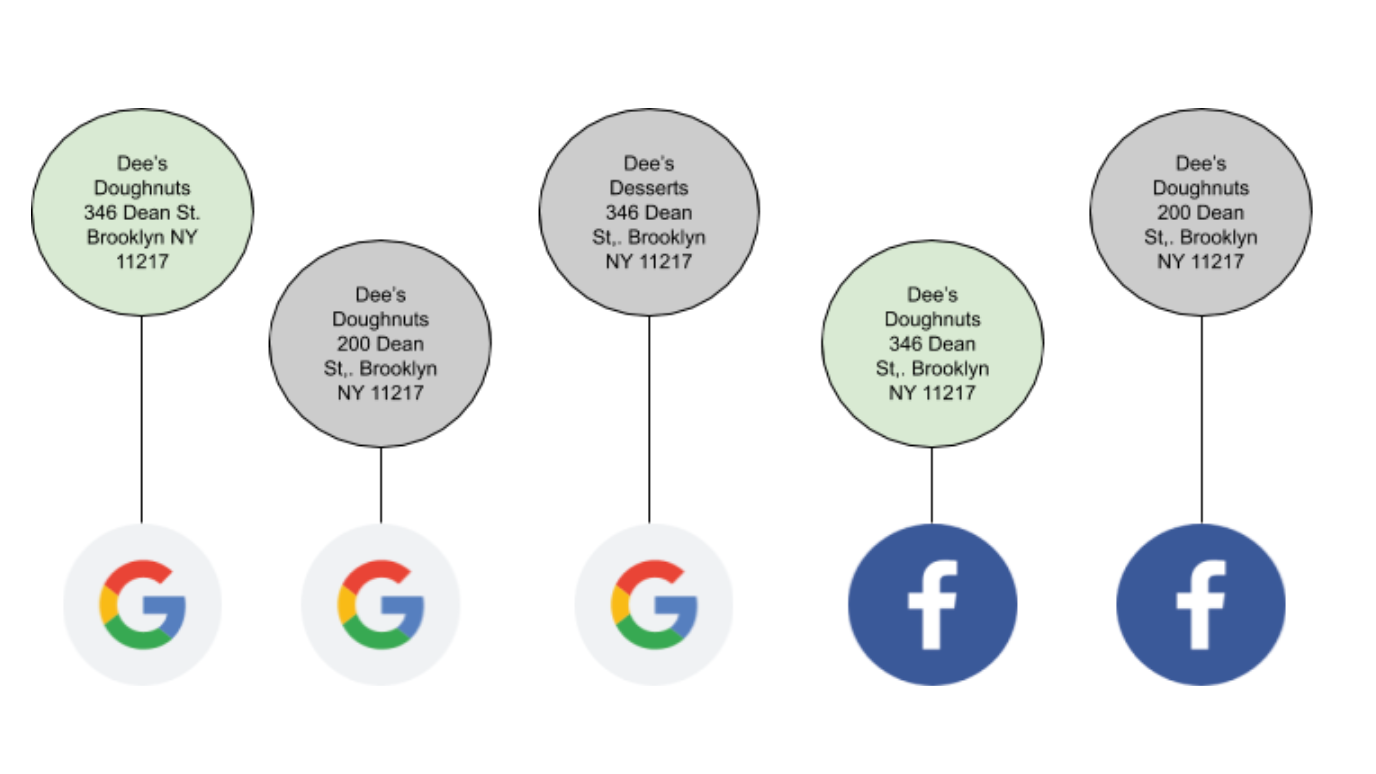

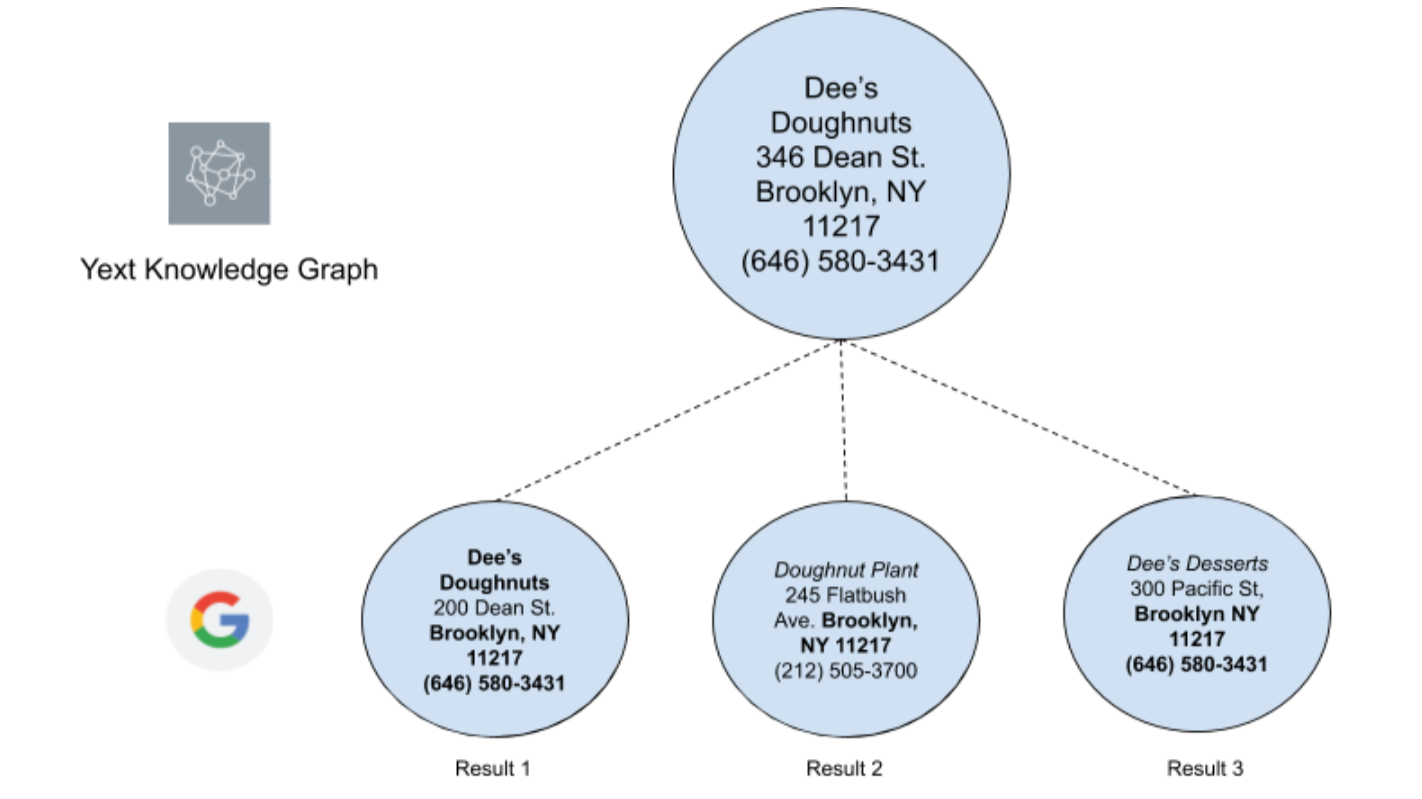

Let's take a look at a simplified example by searching for the business Dee's Doughnuts on Google:

These all look like valid results given the search query, but do these listings actually represent Dee's Doughnuts?

- Result 1: While the addresses do not completely match, the names and phone numbers are identical to the location we are searching for. This is likely a duplicate listing ✓

- Result 2: There is a slight similarity in the business names, but the phone numbers and addresses do not match. This listing likely represents a different business entity ✕

- Result 3: The phone numbers are identical and the names are slightly similar. While this is not as strong a match as Result 1, the phone match and name similarity are enough to consider this listing a potential duplicate ✓
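The reasoning in these three results can be sketched in a few lines of code. This is a simplified illustration only: it uses Python's `difflib.SequenceMatcher` as a stand-in for the real distance functions, and the thresholds, field names, and the `is_potential_duplicate` helper are assumptions made up for this example rather than Yext's production logic.

```python
import re
from difflib import SequenceMatcher


def normalize_phone(phone):
    """Keep digits only and compare on the last ten (drops a country code)."""
    return re.sub(r"\D", "", phone)[-10:]


def similarity(a, b):
    """Case-insensitive string similarity in the range [0, 1]."""
    return SequenceMatcher(None, a.lower().strip(), b.lower().strip()).ratio()


def is_potential_duplicate(entity, listing,
                           name_strong=0.9, name_weak=0.5, address_min=0.8):
    # Thresholds are illustrative guesses, not tuned values.
    name_sim = similarity(entity["name"], listing["name"])
    address_sim = similarity(entity["address"], listing["address"])
    entity_phone = normalize_phone(entity["phone"])
    phone_match = bool(entity_phone) and entity_phone == normalize_phone(listing["phone"])

    # A matching phone plus a loosely similar name is enough (Results 1 and 3).
    if phone_match and name_sim >= name_weak:
        return True
    # Without a phone match, require a near-identical name and address.
    return name_sim >= name_strong and address_sim >= address_min
```

With made-up listings that mirror the example, a result sharing the phone number of Dee's Doughnuts is flagged for review even if its name is "Dee's Desserts", while a loosely similar name with a different phone number and address is filtered out.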

Once we have identified all possible duplicate listings, brands can review the listings we've found and confirm if they are true duplicates of their entities. In the above example, I know that Dee's Desserts (Result 3) is actually the sister cake shop around the corner from Dee's Doughnuts and can easily mark the listing as "Not a Duplicate".

After a listing is confirmed as a true duplicate, our teams do one final manual review to triple-check that the duplicate listing should be suppressed. Having this last confirmation step ensures that no listings for completely separate businesses are accidentally taken down.

Suppress Duplicates in Real-Time

Identifying and approving duplicates are actually the most time-consuming steps of suppressing duplicate listings. Once a duplicate is confirmed and approved, the actual suppression process sometimes takes only a matter of seconds.

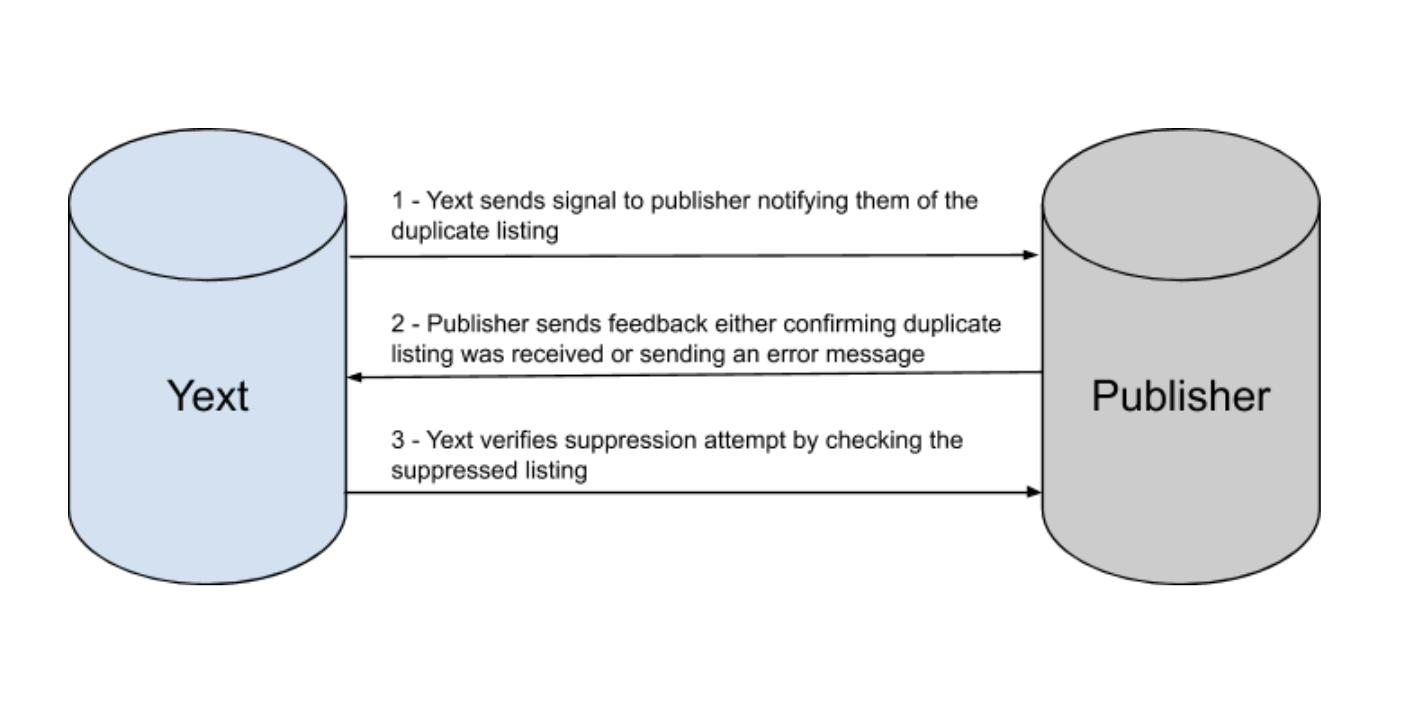

Let's break these three steps down further:

1. Yext sends signal to publisher notifying them of the duplicate listing

Each publisher has its own way of receiving and processing duplicate suppression requests. Some publishers have specific APIs dedicated to suppressing duplicate listings while others are notified of duplicate listings via a data feed on a scheduled cadence. Because of the custom nature of each integration, Yext has dedicated integration teams who build, monitor, and troubleshoot the data delivery process from start to finish.
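One way to picture the difference between the two delivery styles is a shared interface with one implementation that calls an API immediately and another that accumulates rows for a scheduled feed. The class and method names below are hypothetical and chosen for illustration; real integrations are custom to each publisher.

```python
from abc import ABC, abstractmethod


class SuppressionChannel(ABC):
    """Common interface for telling a publisher about a duplicate."""

    @abstractmethod
    def request_suppression(self, duplicate_id, canonical_id):
        ...


class ApiChannel(SuppressionChannel):
    """Publisher with a dedicated suppression API: send right away."""

    def __init__(self, send):
        self._send = send  # callable that performs the actual API call

    def request_suppression(self, duplicate_id, canonical_id):
        self._send({"duplicate": duplicate_id, "canonical": canonical_id})


class FeedChannel(SuppressionChannel):
    """Publisher notified via a data feed: queue rows until the next run."""

    def __init__(self):
        self._pending = []

    def request_suppression(self, duplicate_id, canonical_id):
        self._pending.append((duplicate_id, canonical_id))

    def build_feed(self):
        """Return all queued rows and clear the queue."""
        rows, self._pending = self._pending, []
        return rows
```

The caller only needs to know that a suppression was requested; whether it goes out in seconds or with the next feed depends on the channel behind the interface.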

2. Publisher sends feedback either confirming duplicate listing was received or sending an error message

With an ideal integration, we are able to receive signals back from the publisher on whether or not they successfully ingested our suppression request. This gives us the ability to register any potential errors that would cause the suppression attempt to fail. As in step one, each publisher has its own logic for how to send signals back to us. Not only may the methodology vary (e.g. API response vs. batch receipt ingestion), but publishers may have their own set of validation and error codes to report back any attempt failures. Yext has custom Feedback Handlers built for each integration to manage these incoming signals from publishers.
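A rough sketch of what such handlers do is to translate each publisher's own signals into one shared set of outcomes. Everything here is assumed for illustration: the two publishers, the `result_code` values, the mapping of status codes to outcomes, and the `Feedback` type are hypothetical, not the actual handler interface.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    ACCEPTED = "accepted"
    REJECTED = "rejected"
    RETRY = "retry"


@dataclass(frozen=True)
class Feedback:
    outcome: Outcome
    detail: str = ""


def handle_api_response(status_code, body):
    """Hypothetical publisher A: synchronous HTTP-style response."""
    if 200 <= status_code < 300:
        return Feedback(Outcome.ACCEPTED)
    if status_code == 429 or status_code >= 500:
        # Rate limiting or a server-side failure: worth trying again later.
        return Feedback(Outcome.RETRY, body.get("error", ""))
    return Feedback(Outcome.REJECTED, body.get("error", ""))


def handle_batch_receipt(row):
    """Hypothetical publisher B: one receipt row per listing in a batch file."""
    code = row["result_code"]
    if code == "OK":
        return Feedback(Outcome.ACCEPTED)
    if code == "TEMP_FAIL":
        return Feedback(Outcome.RETRY, code)
    return Feedback(Outcome.REJECTED, code)
```

Once every integration reports in the same vocabulary, the rest of the pipeline can decide whether to verify, retry, or flag an attempt without knowing which publisher it came from.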

3. Yext verifies suppression attempt by checking the suppressed listing

What actually happens when a listing is suppressed? Again, the suppression behavior will depend on how the publisher handles suppressed listings in their own system. For example, some publishers will redirect any users who try to visit the suppressed listing to the correct main listing for that location. Others will hide the suppressed listing entirely and return a 404 "page not found" error. Many times, the suppressed listing itself is not actually removed from the publisher's database but is instead flagged with a specific status to trigger different behavior when the listing is visited. Keeping the listing at the database level is actually beneficial because it helps publishers keep track of changes to listing records and ensures that the same duplicate listing with outdated data does not reappear.

Regardless of the suppression behavior, once we've received feedback that a listing has been suppressed, our systems will attempt to verify that the suppression has been successful. Our logic is set up to know the expected suppression behavior of each publisher so we can confidently confirm when a listing has been successfully suppressed. Whether the publisher refers to it as suppressing, deleting, or merging, any duplicate listings that are suppressed in Yext are removed from search results and will no longer be discoverable.
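As a simplified illustration of this check, the sketch below compares what was observed when visiting the suppressed listing against the behavior expected for that publisher, using the two behaviors mentioned above (a redirect to the main listing, or a 404). The publisher keys, the `EXPECTED_BEHAVIOR` table, and the `is_suppressed` helper are hypothetical; the HTTP request itself is left out so the logic can be read on its own.

```python
# Expected behavior per publisher (illustrative keys and values).
EXPECTED_BEHAVIOR = {
    "publisher_redirect": "redirect",
    "publisher_hidden": "not_found",
}

REDIRECT_CODES = (301, 302, 307, 308)


def is_suppressed(publisher, status_code, location, canonical_url):
    """Check an observed response against the publisher's expected behavior.

    `location` is the redirect target reported by the response, or None.
    """
    expected = EXPECTED_BEHAVIOR.get(publisher)
    if expected == "redirect":
        # The duplicate should send visitors to the main listing.
        return status_code in REDIRECT_CODES and location == canonical_url
    if expected == "not_found":
        return status_code == 404
    # Unknown publisher: we cannot confirm anything.
    return False
```

A listing that still returns a normal page, or redirects somewhere other than the main listing, would not be treated as verified under these assumptions.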

What Happens to Suppressed Listings when Canceling Yext?

Listings that are suppressed will stay suppressed, regardless of whether a brand continues to use Yext. Cleaning up duplicate listings is beneficial not just to brands but also to consumers and our publishers themselves. Therefore, Yext will not revert any past cleanup when a customer's subscription has ended.

Eliminate Duplicates and Take Control of Your Data

While handling duplicate listings at scale can sound daunting, Yext's Duplicate Suppression technology will do the legwork to make sure those rogue listings are found and taken care of. With over 100 powerful custom-built integrations across the Yext Knowledge Network, Yext helps ensure the data that appears across publishers stays in the brand's control. As duplicate listings come down, search results are decluttered — brand-powered listings can rise to the top and users can discover the consistent and accurate information they've been searching for all along.