Overview of Deployment Process | Yext Hitchhikers Platform

What You’ll Learn

In this section, you will:

- Learn the three phases of the deployment process

- Learn how the process is structured for faster deployment and better performance

Overview

The Pages system is architected to build statically generated pages at scale. Most static site generators struggle with sites of more than a few thousand pages, and most require a full site rebuild for even a tiny data change.

The Pages deployment process is optimized for scale and lets you build sites extremely quickly. Incremental data changes update only the affected pages, so changes go live in less than five seconds in most cases.

This unit will walk through the Pages architecture that allows us to achieve these performance numbers.

The Deployment Process

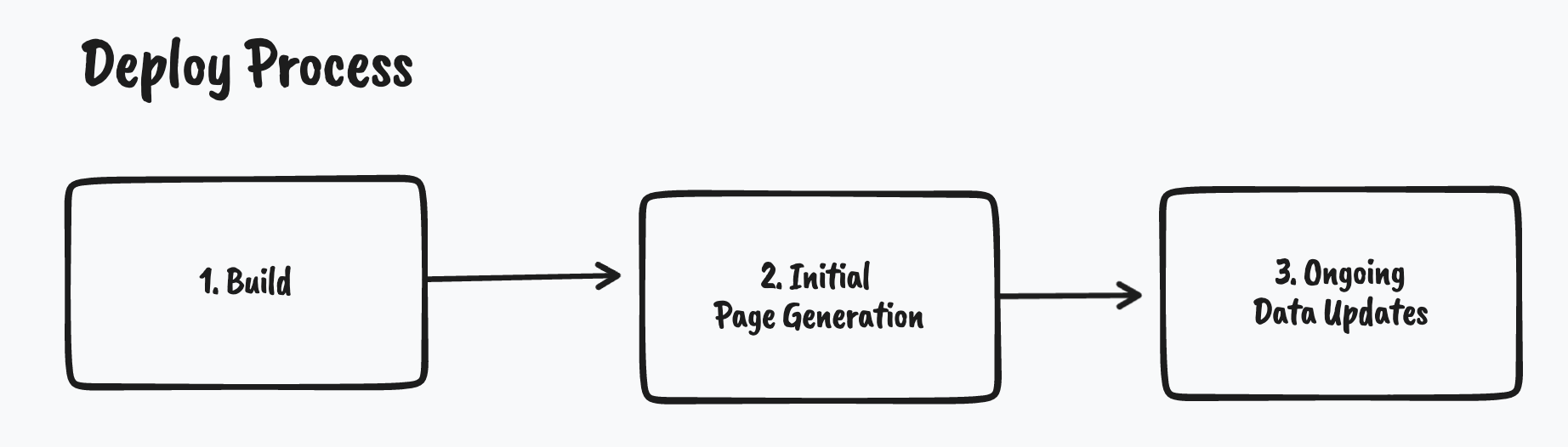

We split the deployment process into three independent phases:

- The initial build

- Page generation

- Ongoing data updates

By decoupling these phases, the system can give each one a processing environment optimized for its specific responsibilities, which makes the overall deployment faster.

1. Initial Build

The initial build phase is responsible for generating all the static assets that make up the frontend of the website. This includes, but is not limited to, CSS stylesheets, JavaScript files, font files, and images.

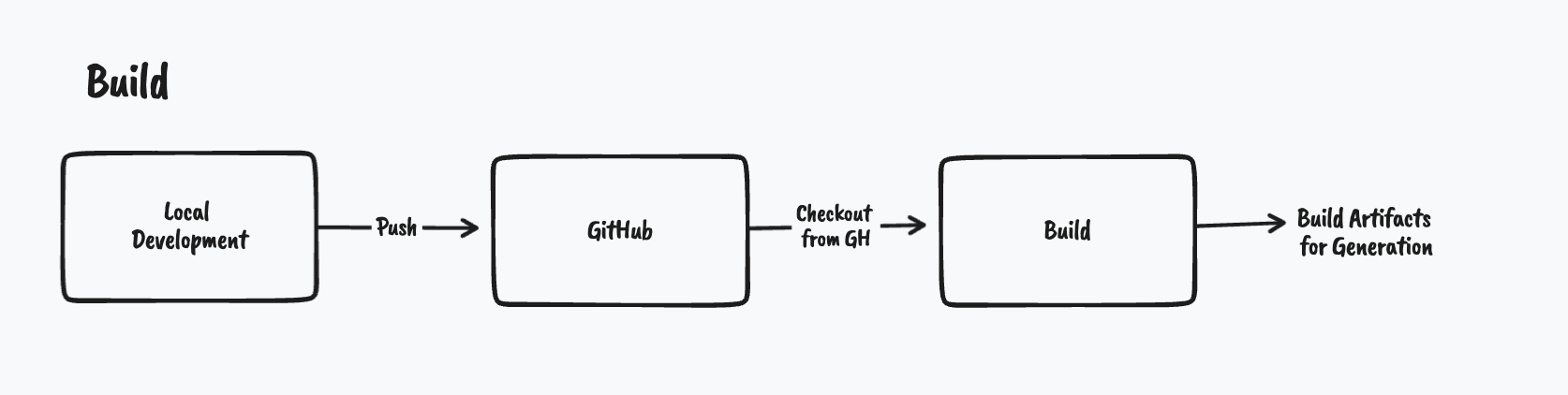

This process starts when a developer pushes code to GitHub, usually after local development and testing. Once the source code is pushed, the Yext Pages system checks out the code and starts the build process.

Importantly, the initial build phase does not interact with the data source – it is not responsible for generating web pages based on data records. It is just responsible for generating the “supporting” assets – typically stylesheets and JavaScript files. This split is critical since it allows us to only update the HTML files when data changes rather than restarting the entire build process from scratch.

The exact build process is determined by a build command in the config.yaml file at the root of the project. This build chain can run arbitrary Node-based tools. Generally, this step runs npm install and then npm run build, but this can be configured. You can learn more in the Site Configuration reference doc.
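As a rough illustration of what "running a build chain" involves, the sketch below runs a list of commands in order and stops at the first failure. It is not how the Pages system is implemented: `run_build` and `demo_commands` are hypothetical names, and the stand-in commands use the Python interpreter so the example runs without Node installed.

```python
import subprocess
import sys


def run_build(commands: list[list[str]]) -> list[str]:
    """Run each build command in order and return the captured stdout of each.

    check=True raises CalledProcessError on the first failing command,
    so later steps never run against a half-finished build.
    """
    outputs = []
    for cmd in commands:
        result = subprocess.run(cmd, check=True, capture_output=True, text=True)
        outputs.append(result.stdout.strip())
    return outputs


# A real project would typically list something like ["npm", "install"]
# followed by ["npm", "run", "build"]. These stand-ins are assumptions
# chosen only to keep the sketch runnable anywhere.
demo_commands = [
    [sys.executable, "-c", "print('dependencies installed')"],
    [sys.executable, "-c", "print('assets built')"],
]
build_log = run_build(demo_commands)
```

Note that nothing in this step receives data records; its only input is the project source and its configured commands.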

Once the initial build completes, the initial page generation begins.

2. Initial Page Generation

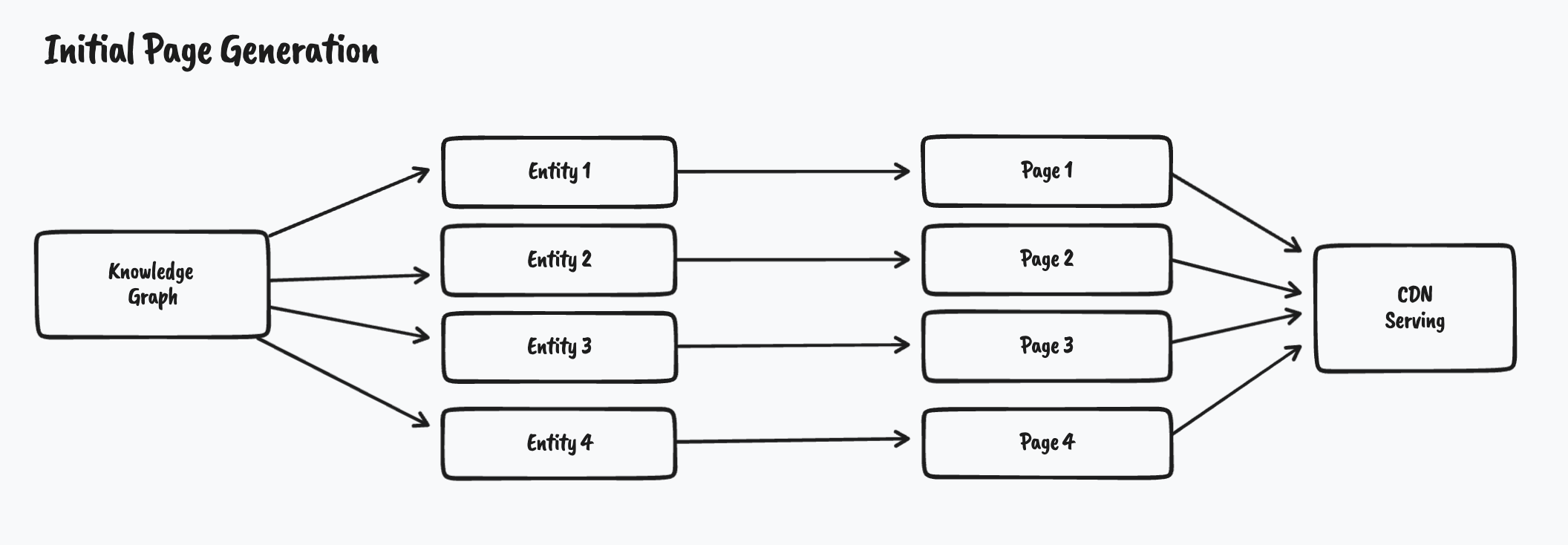

The page generation phase is responsible for generating the HTML web pages that make up the site. These web pages are generated based on data records from the Yext Knowledge Graph. Under the hood, this uses a system called Streams that pushes entities from the Knowledge Graph into Pages.

For every data record, we generate an HTML page using the build artifacts from the previous step. After the HTML is generated, the files are automatically pushed to our global CDN. Since each page can be generated independently, this process can happen in parallel, which greatly improves generation speed.
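Because each page depends only on its own record and the shared build artifacts, generation is straightforward to parallelize. The sketch below is a minimal illustration under assumed names (`render_page`, `generate_pages`, and the record and artifact shapes are hypothetical, not the Pages API); it uses a thread pool to render one page per record.

```python
from concurrent.futures import ThreadPoolExecutor
from html import escape


def render_page(record: dict, artifacts: dict) -> tuple[str, str]:
    """Render one record to (path, html) using the shared build artifacts."""
    html = (
        f'<html><head><link rel="stylesheet" href="{artifacts["css"]}"></head>'
        f'<body><h1>{escape(record["name"])}</h1>'
        f'<script src="{artifacts["js"]}"></script></body></html>'
    )
    return f'/{record["id"]}.html', html


def generate_pages(records: list[dict], artifacts: dict, workers: int = 4) -> dict[str, str]:
    """Render every record independently; no page needs any other page."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(pool.map(lambda r: render_page(r, artifacts), records))


# Hypothetical artifacts from the build phase and records from the data source.
artifacts = {"css": "/assets/main.css", "js": "/assets/main.js"}
records = [
    {"id": "store-1", "name": "Downtown"},
    {"id": "store-2", "name": "Airport & Terminal"},
]
pages = generate_pages(records, artifacts)
```

Here `pages` maps each output path to its HTML, which is the point at which a real system would upload the files to a CDN.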

After the initial page generation is complete, the deploy is live and is served via the CDN. If data in the Knowledge Graph changes after this generation, those updates are processed incrementally in the next step.

3. Ongoing Data Updates

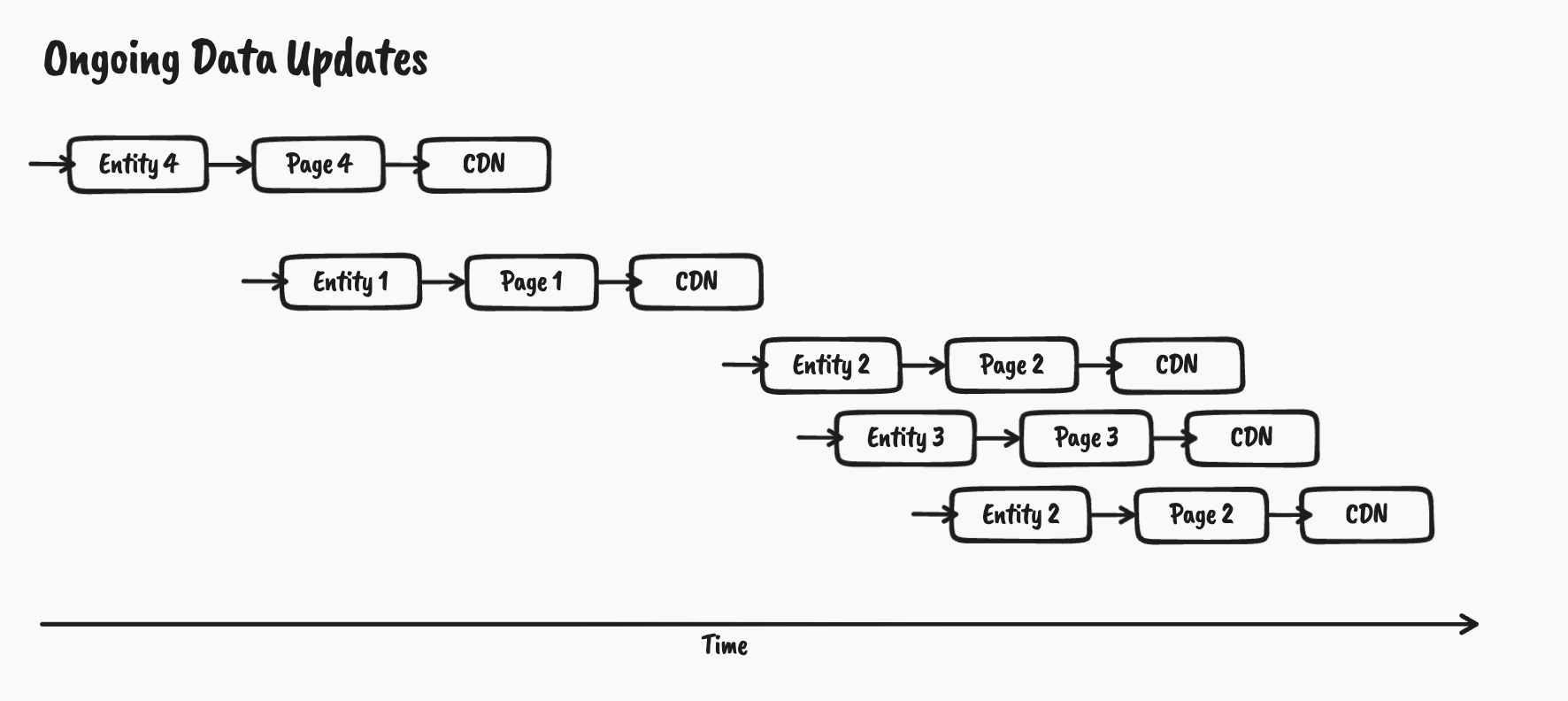

After the initial page generation, the deploy process is not over. The deploy listens for ongoing data changes via the same Streams system used in step 2. If data changes in the Knowledge Graph, the Streams system notifies the site to incrementally rebuild any pages the data change affects.

Unlike other static site generation systems, this does not kick off a new build from the beginning. Instead, it incrementally generates the HTML for only the pages that have changed. Best of all, it can reuse the same build artifacts that were created in step 1, so it doesn’t have to go through the time-consuming build process again. The net result is incremental publishes in less than five seconds.
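The difference from a full rebuild can be made concrete with a small model. In this sketch (the `Site` class and its counters are hypothetical, for illustration only), the build runs once, and a data change re-renders exactly one page while reusing the stored artifacts.

```python
class Site:
    """Toy model: build once, render per record, re-render only on change."""

    def __init__(self, source: str, records: dict[str, dict]):
        self.build_count = 0
        self.render_count = 0
        self.artifacts = self._build(source)  # step 1: runs once
        self.pages = {rid: self._render(rec) for rid, rec in records.items()}  # step 2

    def _build(self, source: str) -> dict:
        self.build_count += 1
        return {"css": f"/assets/{source}.css"}

    def _render(self, record: dict) -> str:
        self.render_count += 1
        return f'<link href="{self.artifacts["css"]}"><h1>{record["name"]}</h1>'

    def on_data_change(self, record_id: str, record: dict) -> None:
        # Step 3: regenerate only the affected page; artifacts are reused as-is.
        self.pages[record_id] = self._render(record)


site = Site("main", {"a": {"name": "Alpha"}, "b": {"name": "Beta"}})
page_b_before = site.pages["b"]
site.on_data_change("a", {"name": "Alpha v2"})
```

After the update, the build has still run only once, page "b" is untouched, and only one extra render has happened.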

The below diagram shows this process happening over time as entities in the Knowledge Graph change.

If many updates arrive at once, the Pages system can parallelize these requests to minimize the total processing time.

Wrap Up

The deployment process is managed by the Pages system without additional developer intervention. If changes to the structure of the site are required, the developer just has to push their latest commit to GitHub. Otherwise, data changes in the Knowledge Graph automatically update the relevant statically generated pages in real time. The Pages system takes care of all the complicated details of managing the build artifacts, generating HTML, and knowing when to regenerate which pages.
