Types of QA | Yext Hitchhikers Platform

What You’ll Learn

By the end of this unit, you will be able to:

- List and explain the four types of QA

- Identify the general issues you may face during each type of QA

- Complete the steps of the QA process

Overview

Integrating Yext Search on the site’s main domain matters because it lets us make improvements based on real user data. Part of what makes Yext Search such a valuable product is its real-time search analytics, which tell us what people are searching for and how they engage with the content in the experience. This allows us to make data-driven decisions to optimize the search experience.

However, it’s also imperative to QA your search experience thoroughly before integrating with the site’s main domain. We want to ensure that all of the client’s top-priority queries return optimal results so that we have a strong foundation in place before going live. Post-launch enhancements should consist of smaller, incremental improvements, such as adding a synonym in the backend or adding more content to the platform.

There are generally four types of QA you’ll need to complete prior to launch:

- Browser & Device Testing

- Search Quality Testing

- UI Testing

- Analytics Testing

Browser & Device Testing

Browser and device testing requires you to test your Search experience on several browsers and devices to ensure it performs consistently across all of them. We recommend using a cross-browser testing tool like BrowserStack to easily test different browsers and devices.

Generally speaking, you should test all Search experiences on the following browsers and devices:

- Google Chrome (latest two versions)

- Firefox (latest two versions)

- Safari

- Microsoft Edge

- Android (Chrome and Firefox)

- iPhone (Safari, Chrome and Firefox)

- Tablet (Safari, Chrome and Firefox)

We suggest testing the latest two versions of Chrome and Firefox as they are some of the more commonly used browsers. For the other browsers listed above, feel free to test only on the latest version.

If you don’t have access to a tool like BrowserStack, you can test the experience on each of the browsers you have downloaded on your devices.
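The browser and device list above can be written down as a small test matrix so that nothing is skipped during a QA pass. The following is a minimal sketch, not an official tool: the `Target` class and `build_matrix` function are hypothetical names, and it assumes that the "latest two versions" guidance applies only to desktop Chrome and Firefox, with every other combination tested on the latest version.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    platform: str       # "Desktop", "Android", "iPhone", or "Tablet"
    browser: str
    versions_back: int  # 0 = latest release, 1 = the release before it


# Browsers to cover on each platform, taken from the list above.
PLATFORM_BROWSERS = {
    "Desktop": ["Chrome", "Firefox", "Safari", "Microsoft Edge"],
    "Android": ["Chrome", "Firefox"],
    "iPhone": ["Safari", "Chrome", "Firefox"],
    "Tablet": ["Safari", "Chrome", "Firefox"],
}

# Assumption: only these desktop browsers are tested on two versions.
TWO_VERSION_BROWSERS = {"Chrome", "Firefox"}


def build_matrix():
    """Return one Target per platform, browser, and version to test."""
    targets = []
    for platform, browsers in PLATFORM_BROWSERS.items():
        for browser in browsers:
            two = platform == "Desktop" and browser in TWO_VERSION_BROWSERS
            for back in range(2 if two else 1):
                targets.append(Target(platform, browser, back))
    return targets


matrix = build_matrix()
for target in matrix:
    print(target.platform, target.browser, "latest" if target.versions_back == 0 else "previous")
```

Adjust `PLATFORM_BROWSERS` to match the browsers your client's users actually rely on.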

Browser Testing Expectations

It’s important to standardize what’s being tested in each of the browsers and devices listed above. You want to ensure that the experience is functioning as expected across all browsers.

Generally, you should review all aspects of the Search experience you have turned on in the frontend, clicking on all links and features across every vertical.

For each browser or device you test, complete the following checks:

- Ensure a map appears on both universal and vertical search (where applicable)

- Click all hyperlinked text to ensure CTAs are working correctly

- Pay attention to the target link behavior (whether each link opens in the same tab or a new tab)

- Move between vertical tabs and use the “View All” navigation on universal search

- Expand and collapse FAQs
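One way to standardize these checks is to record a pass or fail for each check on each browser and then list what is still outstanding. This is a minimal sketch with hypothetical names (`CHECKS`, `outstanding`); the check labels paraphrase the list above, and any check that has not been recorded is treated as not yet passed.

```python
# Check labels paraphrased from the list above.
CHECKS = [
    "Map appears on universal and vertical search (where applicable)",
    "Hyperlinked text and CTAs work",
    "Target link behavior is correct",
    "Vertical tabs and 'View All' navigation work",
    "FAQs expand and collapse",
]


def outstanding(results):
    """Map each browser or device to the checks not yet marked as passed."""
    return {
        target: [check for check in CHECKS if not passed.get(check, False)]
        for target, passed in results.items()
    }


# Example: Safari on iPhone is fully checked; Edge has only the map verified.
results = {
    "Safari (iPhone)": {check: True for check in CHECKS},
    "Microsoft Edge": {CHECKS[0]: True, CHECKS[4]: False},
}

for target, todo in outstanding(results).items():
    print(target, "->", todo if todo else "all checks passed")
```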

Feature Testing Expectations

Below is a list of the individual components of a Search experience that you should QA. Depending on the experience, you may not be using all of these features.

- Map on both universal and vertical search

- Direct Answers

- Vertical Tab and “View All” Navigation

- Facets and Filter Components

- Configured No Results Behavior

- Spellcheck Component

- Location Bias Component

- Pagination Component

- Query Rules

- Configurable Sorting

- Custom Backends

- iFrame (/iframe_test) - This only applies if your client decides to move forward with the JS Snippet path. You’ll learn more about the integration options in Integration Options and the Results Page.

Search Quality Testing

When testing the search quality of your experience, you are confirming that the most relevant and appropriate information stored in the platform is returned for a given query. You’ve already learned about this in the backend Search Optimizations unit, but here is a quick refresher.

To assess search quality, run through 20-40 queries and confirm that relevant results are returned for each one. We recommend identifying or creating a list of “top-priority queries”. For example, on a restaurant’s website, users might ask: “Restaurants near me”, “How many calories are in a breakfast burrito?”, or “What allergens are in a fish taco?”

In an ideal world, each query you assess will return the expected results. However, this isn’t always the case and oftentimes there are adjustments that you will want to make.
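A list of top-priority queries can double as a repeatable check: pair each query with the result you expect and flag the queries where that result does not appear near the top. The sketch below is illustrative only. `failing_queries` is a hypothetical helper, the entity IDs are made up, and `search` stands in for however you retrieve results (it is a stub here, not a Yext API call).

```python
from typing import Callable, Dict, List


def failing_queries(
    expected: Dict[str, str],
    search: Callable[[str], List[str]],
    top_n: int = 3,
) -> List[str]:
    """Return the queries whose expected entity is missing from the top results."""
    failures = []
    for query, entity_id in expected.items():
        if entity_id not in search(query)[:top_n]:
            failures.append(query)
    return failures


def fake_search(query: str) -> List[str]:
    """Stub that returns canned entity IDs in ranked order."""
    canned = {
        "Restaurants near me": ["location-1", "location-2"],
        "What allergens are in a fish taco?": ["faq-9"],
    }
    return canned.get(query, [])


# Hypothetical expectations for two of the example queries.
expected = {
    "Restaurants near me": "location-1",
    "What allergens are in a fish taco?": "menu-fish-taco",
}

print(failing_queries(expected, fake_search))
```

Each query that is flagged is a candidate for the adjustments discussed in the rest of this section.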

Types of Search Quality Issues

When a query does not return the relevant results, the cause generally falls into one of three buckets:

- Knowledge Graph Issue: There is no content in the platform that addresses the query.

- Configuration Issue: Backend configuration options (e.g., searchable fields, synonyms, etc.) need to be adjusted to return the relevant entities.

- Algorithm Issue: Although the content in the platform has relevant data and the configuration is as optimized as possible, the Search algorithm is not able to correctly match or rank the content.
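The three buckets suggest a natural order for troubleshooting: first check whether the content exists, then whether the configuration is optimized, and only then attribute the problem to the algorithm. The sketch below encodes that order; the `Issue` enum and `triage` function are hypothetical names, and the two yes/no inputs are answers you determine by inspecting the platform yourself.

```python
from enum import Enum


class Issue(Enum):
    KNOWLEDGE_GRAPH = "Knowledge Graph Issue"
    CONFIGURATION = "Configuration Issue"
    ALGORITHM = "Algorithm Issue"


def triage(content_exists: bool, config_optimized: bool) -> Issue:
    """Classify a failing query using the three buckets described above."""
    if not content_exists:
        # Nothing in the platform addresses the query.
        return Issue.KNOWLEDGE_GRAPH
    if not config_optimized:
        # Content exists, but searchable fields, synonyms, etc. need work.
        return Issue.CONFIGURATION
    # Content and configuration are in place, yet matching or ranking is off.
    return Issue.ALGORITHM


# Example: relevant entities exist, but the configuration has not been tuned.
print(triage(content_exists=True, config_optimized=False).value)
```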

User Interface (UI) Testing

Once you’re comfortable with the search results, you want to make sure that those results are visually appealing. As part of the user interface QA process, you want to confirm that your experience looks consistent throughout, and that there are no visual defects. You’ll want to ensure the look and feel of the experience matches any brand guidelines and expectations such as color scheme and fonts.

Types of UI Issues

If you’re noticing an issue or something looks wrong with the frontend of your search experience, it’s likely due to one of the following issues:

- Visual: An issue with the branding or layout of visual components

- Data: Data mapping or text labels on the experience are incorrect

- Technical: Functional issues with the user interface

Analytics Testing

As you know, part of what makes Search such a valuable product is its real-time analytics, which track what users search for and how they engage with your content. This allows you to make data-driven adjustments to the experience as you gather more information.

We track various actions that a user takes on your experience. For example, we track whether a user clicks the Directions CTA for a restaurant entity or the Order Now CTA for a menu item. For a more complete list of the analytics that we track on the search experience, see this reference doc.

Here are a few examples of event types:

- AUTO_COMPLETE_SELECTION

- THUMBS_UP

- THUMBS_DOWN

- ROW_EXPAND

You will want to make sure these events are firing properly before going live.

How to QA Analytics

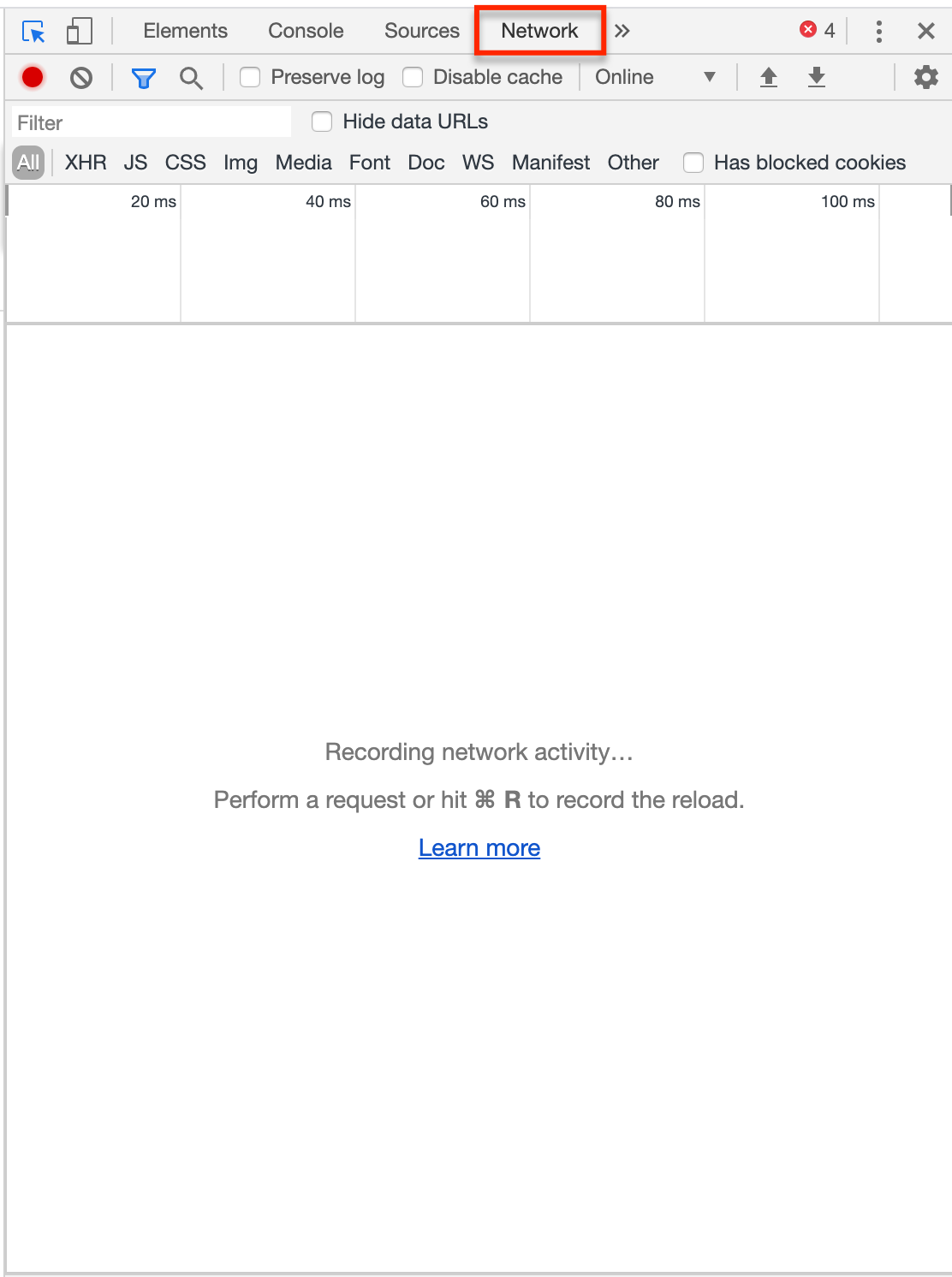

To ensure that the analytics events are firing correctly, you’ll need to inspect the webpage. To do this, right-click anywhere on your search experience and select ‘Inspect’. This will open a pane on the right-hand side of your screen. Select the Network tab from the header, and check Preserve log so that logged events are not cleared when the page navigates.

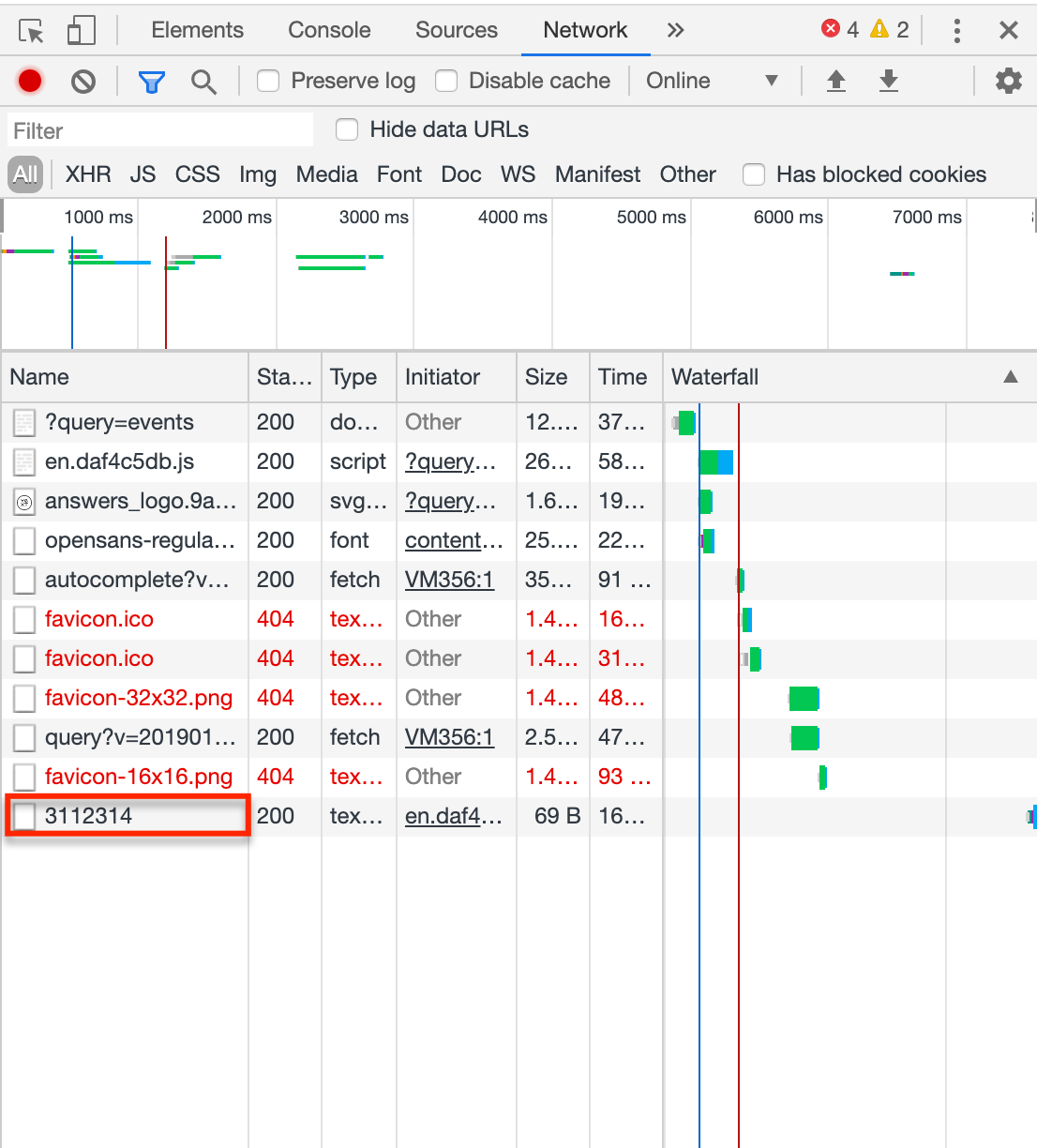

Run a query in the search bar of your Search experience. Now click one of the CTAs directly in the search experience (it’s easiest if you click to open in a new tab), and you will see a numbered event (the number is your account ID) appear in the Network tab. This means an analytics event has fired and been logged!

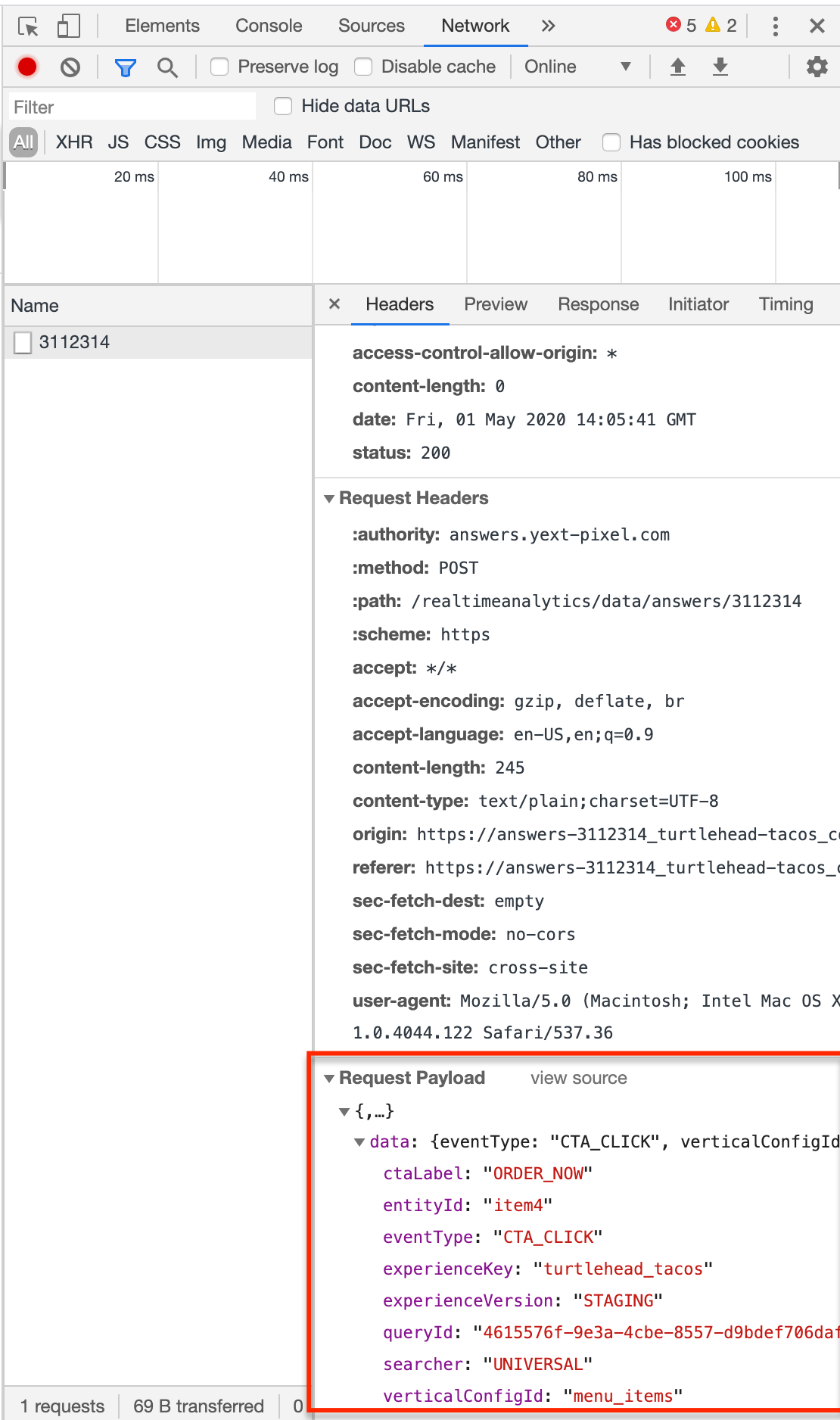

Click the event in the Network tab. Scroll to the bottom of the window, where you will see ‘Request Payload’. Click the arrow next to ‘data’ to expand its contents. Confirm that the correct attributes have been passed, such as the entity ID, the event type, and the vertical configuration ID.

If all of that is correct, the analytics event has fired successfully!
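If you copy the request payload out of the Network tab, the same confirmation can be done with a short script. This is a sketch under stated assumptions: the key names `eventType`, `entityId`, and `verticalConfigId` are placeholders for the attributes mentioned above and may not match what your payload actually contains, so substitute the names you see under ‘data’. `missing_attributes` is a hypothetical helper, and the sample payload is made up.

```python
import json

# Assumed key names; replace them with the keys shown in your own payload.
REQUIRED_KEYS = ("eventType", "entityId", "verticalConfigId")


def missing_attributes(payload_json, required=REQUIRED_KEYS):
    """Return the required keys that are absent or empty under 'data'."""
    data = json.loads(payload_json).get("data", {})
    return [key for key in required if not data.get(key)]


# Made-up payload pasted from the Network tab, missing the vertical config ID.
sample = '{"data": {"eventType": "ROW_EXPAND", "entityId": "faq-9"}}'

print(missing_attributes(sample))
```

An empty list means every attribute you listed was present in the payload.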

What are the types of QA that you should complete before launching Search?

If you search for 'soda' and receive no results (despite having a menu items vertical and soda entities in Knowledge Graph), what type of QA issue might this be?

What should you do across different browsers and devices to complete this part of QA?
